Naive Bayes

A quick notebook on how to create and interpret Naive Bayes models.

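library(modeldata)  # attrition data set
library(klaR)       # Naive Bayes engine behind caret's "nb" method
library(rsample)    # initial_split(), training(), testing()
library(dplyr)      # mutate(), select(), %>%
library(tidyr)      # gather(), used for the density plots
library(ggplot2)    # ggplot(), geom_density(), facet_wrap()
library(caret)      # train(), trainControl(), confusionMatrix()

Naive Bayes is a supervised machine learning algorithm based on Bayes' theorem.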

It is called naive because it assumes that the predictor variables are independent of one another within each class, which is rarely true in practice.

Bayes' rule:

$$P(A \mid B) = \frac{P(B \mid A)\, P(A)}{P(B)}$$

- $P(A \mid B)$: Conditional probability of event A occurring, given event B

- $P(A)$: Probability of event A occurring

- $P(B)$: Probability of event B occurring

- $P(B \mid A)$: Conditional probability of event B occurring, given event A
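As a quick numeric check of the rule, the sketch below plugs made-up probabilities into it and computes $P(A \mid B)$. The numbers are illustrative assumptions, not values taken from the attrition data.

```r
# Hypothetical probabilities, chosen only for illustration
p_a             <- 0.16  # P(A): prior probability of event A
p_b_given_a     <- 0.50  # P(B | A)
p_b_given_not_a <- 0.25  # P(B | not A)

# Law of total probability gives the denominator P(B)
p_b <- p_b_given_a * p_a + p_b_given_not_a * (1 - p_a)

# Bayes' rule: P(A | B) = P(B | A) * P(A) / P(B)
p_a_given_b <- p_b_given_a * p_a / p_b
p_a_given_b
```

Observing B raises the probability of A above its prior because B is twice as likely under A as under its complement.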

In Naive Bayes, there are multiple predictor variables and more than one output class.

The objective of a Naive Bayes algorithm is to estimate the conditional probability of each class given an observation's feature vector.

Problem Statement:

Predict employee attrition

data("attrition")

# Treat these integer-coded columns as categorical
attrition <- attrition %>%
  mutate(JobLevel = factor(JobLevel),
         StockOptionLevel = factor(StockOptionLevel),
         TrainingTimesLastYear = factor(TrainingTimesLastYear))

Create training (70%) and test (30%) sets, setting a seed for reproducibility.

set.seed(123)
# Stratify on Attrition so both sets keep a similar class balance
split <- initial_split(data = attrition, prop = 0.7, strata = "Attrition")
train <- training(split)
test <- testing(split)

Distribution of Attrition rates across train and test sets:

prop.table(table(train$Attrition))
prop.table(table(test$Attrition))

Notice that they are very similar.

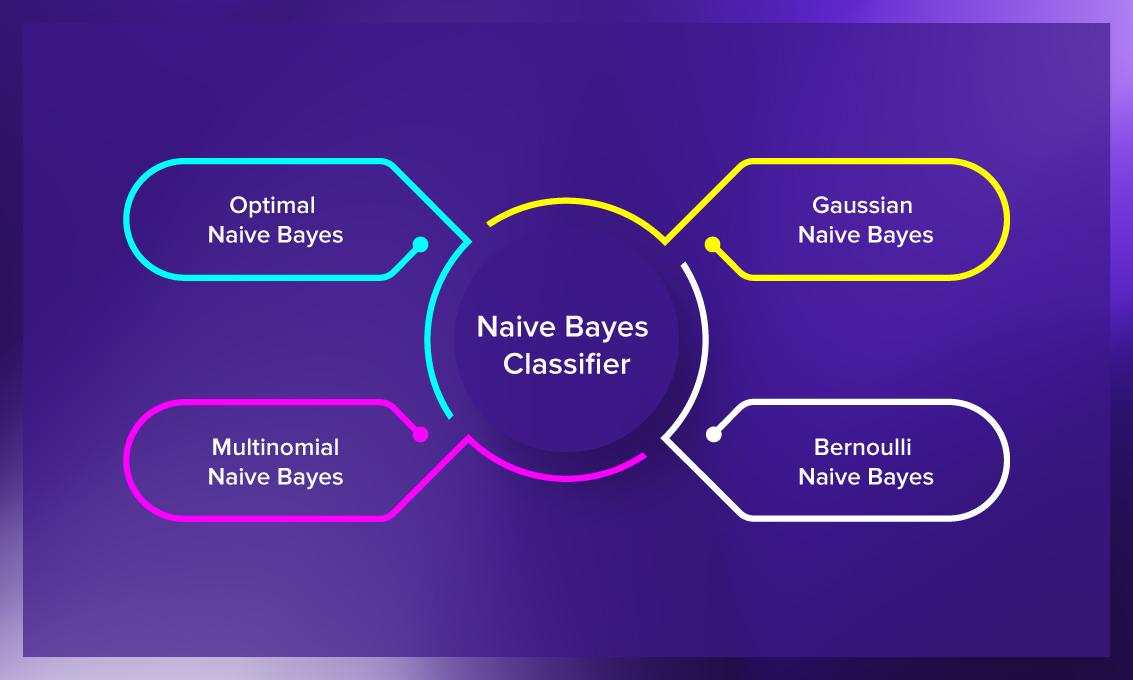
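To see why stratifying keeps the class proportions aligned, here is a conceptual base-R sketch on a toy outcome vector. It is not rsample's actual implementation; the data and the names `outcome`, `toy_train`, and `toy_test` are hypothetical.

```r
set.seed(123)

# Toy imbalanced outcome (hypothetical data, 1000 rows)
outcome <- factor(rep(c("No", "Yes"), times = c(840, 160)))

# Sample 70% of the row indices within each class separately
idx_by_class <- split(seq_along(outcome), outcome)
train_idx <- unlist(lapply(idx_by_class, function(idx) {
  sample(idx, size = round(0.7 * length(idx)))
}))

toy_train <- outcome[train_idx]
toy_test  <- outcome[-train_idx]

# Both sets keep the same share of "Yes" as the full vector
prop.table(table(toy_train))
prop.table(table(toy_test))
```

Because the 70% draw happens inside each class, neither set can end up with a disproportionate share of the rarer class.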

A Naive Overview

The Naive Bayes classifier is founded on Bayesian probability, which incorporates the concept of conditional probability.

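In our attrition data set, we are seeking the probability that an employee belongs to attrition class $C_k$ (Yes or No) given the predictor variables $x_1, \dots, x_n$.

The posterior:

$$P(C_k \mid x_1, \dots, x_n) = \frac{P(C_k)\, P(x_1, \dots, x_n \mid C_k)}{P(x_1, \dots, x_n)}$$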

Assumption:

The Naive Bayes classifier assumes that the predictor variables are conditionally independent of one another given the class: once the class is known, the value of one predictor carries no further information about another.
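Written out, with $C_k$ denoting a class and $x_1, \dots, x_n$ the predictor values (notation assumed here for illustration), the independence assumption lets the joint likelihood factor into one term per predictor:

$$P(x_1, \dots, x_n \mid C_k) = \prod_{i=1}^{n} P(x_i \mid C_k)$$

Since the denominator of Bayes' rule is the same for every class, the posterior is proportional to the prior times this product:

$$P(C_k \mid x_1, \dots, x_n) \propto P(C_k) \prod_{i=1}^{n} P(x_i \mid C_k)$$

The predicted class is the one with the largest value of the right-hand side.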

An assumption of normality is often used for continuous variables.
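As a minimal sketch of what the normality assumption means in practice, the snippet below scores one new value of a numeric predictor under a Gaussian density per class. The priors, means, and standard deviations are hypothetical placeholders; in a fitted model they would be estimated from the training data.

```r
# Hypothetical class priors and per-class summaries of one numeric predictor
prior <- c(No = 0.84, Yes = 0.16)
mu    <- c(No = 38, Yes = 33)  # assumed class means
sigma <- c(No = 9,  Yes = 10)  # assumed class standard deviations

x_new <- 30  # predictor value for a new observation

# Gaussian likelihood of x_new under each class
likelihood <- dnorm(x_new, mean = mu, sd = sigma)

# Posterior = prior * likelihood, normalised so the classes sum to 1
posterior <- prior * likelihood / sum(prior * likelihood)
posterior
```

With several predictors, the per-predictor likelihoods would be multiplied together before normalising.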

However, density plots of a few numeric predictors show that the normality assumption does not always hold:

train %>%
  select(Age, DailyRate, DistanceFromHome, HourlyRate, MonthlyIncome, MonthlyRate) %>%
  # Reshape to long format: one row per (metric, value) pair
  tidyr::gather(metric, value) %>%
  ggplot(aes(value, fill = metric)) +
  geom_density(show.legend = FALSE) +
  facet_wrap(~ metric, scales = "free")

Advantages and Shortcomings

The Naive Bayes classifier is simple, fast, and scales well to large data sets.

A major disadvantage is that it relies on the often-wrong assumption of equally important and independent features.

Implementation

We will utilize the caret package in R.

- Create response and feature data

features <- setdiff(names(train), "Attrition")
x <- train[, features]
y <- train$Attrition

Initialize 10-fold cross-validation using caret's trainControl function.

trControl <- trainControl(method = "cv", number = 10)

Train the Naive Bayes model:

nb.fit <- train(
  x = x,
  y = y,
  method = "nb",
  trControl = trControl
)

Confusion matrix to analyze our cross-validated results:

confusionMatrix(nb.fit)

plot(nb.fit)

To test the accuracy on our test data set:

preds <- predict(nb.fit, newdata = test)

confusionMatrix(preds, reference = test$Attrition)
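To help read the confusion matrix output, the sketch below rebuilds the main metrics by hand from a small table. The `actual` and `predicted` vectors are hypothetical toy labels, not output from the model above.

```r
# Hypothetical predicted and actual labels (toy data, not model output)
actual <- factor(
  c("No", "No", "No", "No", "No", "No", "Yes", "Yes", "Yes", "Yes"),
  levels = c("No", "Yes")
)
predicted <- factor(
  c("No", "No", "No", "No", "No", "Yes", "Yes", "Yes", "No", "No"),
  levels = c("No", "Yes")
)

# Rows are predictions and columns are the reference labels
cm <- table(Prediction = predicted, Reference = actual)
cm

# Treat "Yes" (attrition) as the class of interest
tp <- cm["Yes", "Yes"]; tn <- cm["No", "No"]
fp <- cm["Yes", "No"];  fn <- cm["No", "Yes"]

accuracy    <- (tp + tn) / sum(cm)  # share of all predictions that are correct
sensitivity <- tp / (tp + fn)       # share of actual "Yes" cases that were caught
specificity <- tn / (tn + fp)       # share of actual "No" cases correctly left alone
c(accuracy = accuracy, sensitivity = sensitivity, specificity = specificity)
```

Note that caret's confusionMatrix treats the first factor level as the positive class by default, so with levels "No" and "Yes" its reported sensitivity refers to "No" unless positive = "Yes" is passed. On an imbalanced outcome such as attrition, accuracy alone can look good even when few "Yes" cases are detected, so sensitivity for "Yes" is worth checking.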